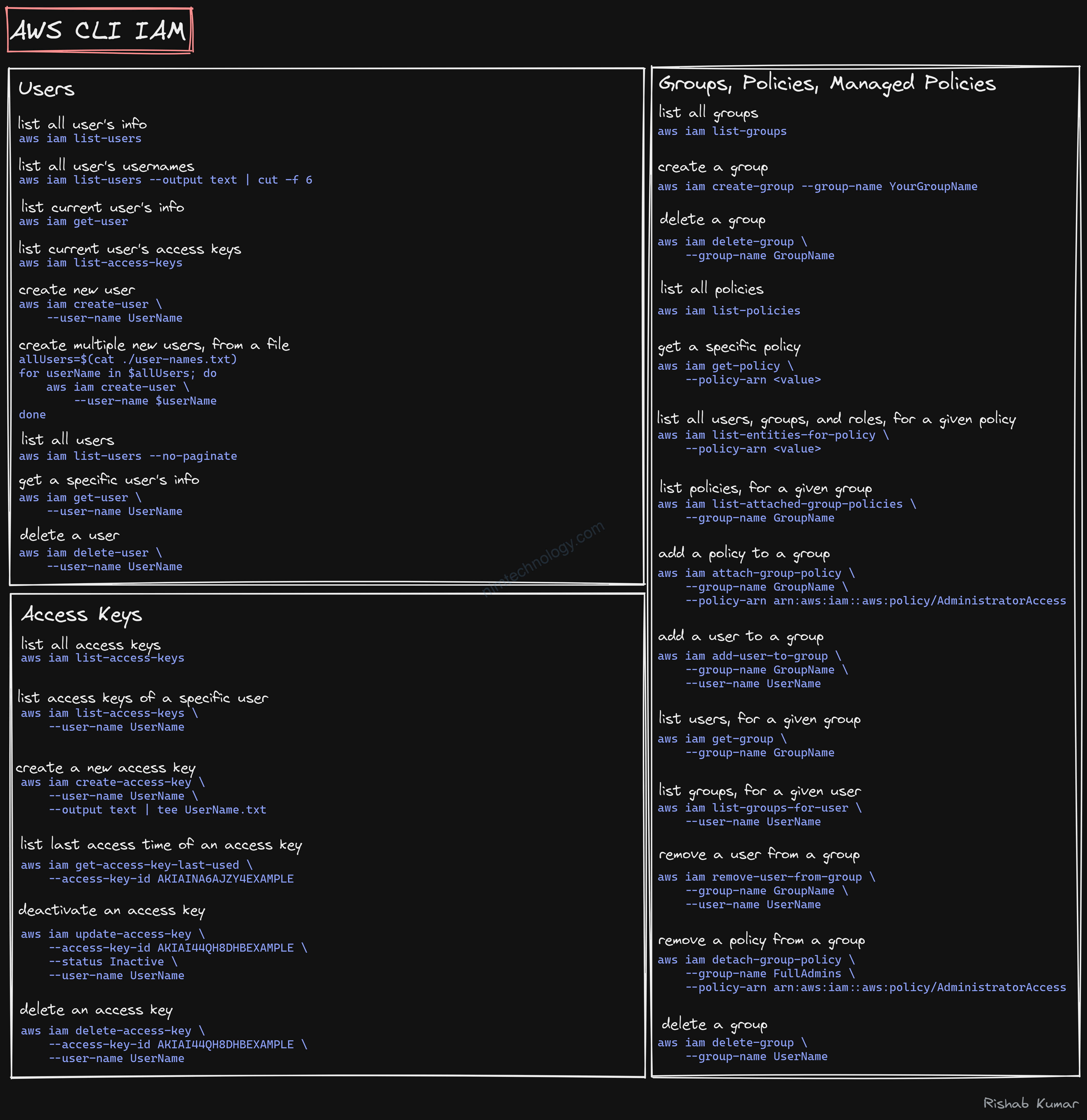

Install AWS CLI

apt update -y

apt install unzip -y

curl "https://awscli.amazonaws.com/awscli-exe-linux-x86_64.zip" -o "awscliv2.zip"

unzip awscliv2.zip > /dev/null 2>&1

sudo ./aws/install > /dev/null 2>&1

When these commands run from a script they write a lot of log output to stdout, so you can append > /dev/null 2>&1 to the end of a command to suppress it.

https://askubuntu.com/questions/474556/hiding-output-of-a-command
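The redirection described above can be wrapped in a small helper so that noisy install steps stay quiet while their exit status is still visible to the script. This is only a sketch; `run_quiet` is a hypothetical name, not part of the AWS CLI or of any tool mentioned here.

```shell
#!/usr/bin/env bash
# run_quiet (hypothetical helper): run any command with stdout and stderr
# discarded, while still returning the command's exit status to the caller.
run_quiet() {
  "$@" > /dev/null 2>&1
}

# Demo: the inner command writes to both streams and exits with status 3.
# Nothing it prints reaches the terminal, but the failure is still detected.
if run_quiet sh -c 'echo "to stdout"; echo "to stderr" >&2; exit 3'; then
  echo "command succeeded"
else
  echo "command failed with status $?"
fi
```

In an install script this could be used as `run_quiet unzip awscliv2.zip`, followed by a check of the return code, so that a failed step is not hidden along with its output.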

No such file or directory: ‘less’: ‘less’

[Errno 2] No such file or directory: 'less': 'less'

Exited with code exit status 255

https://github.com/aws/aws-cli/issues/5038#issue-575848470

sudo apt-get update && sudo apt-get install -yy less

aws ….
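An alternative to installing less is to switch the pager off, since AWS CLI v2 reads the `AWS_PAGER` environment variable (the ECR script later in this post does the same with `export AWS_PAGER=""`). The sketch below assumes AWS CLI v2; `aws_nopager` is a hypothetical wrapper name.

```shell
#!/usr/bin/env bash
# aws_nopager (hypothetical wrapper): run one AWS CLI command with the
# pager disabled, so the CLI never tries to start `less`.
# Assumption: AWS CLI v2, which honours the AWS_PAGER environment variable.
aws_nopager() {
  AWS_PAGER="" aws "$@"
}

# Usage (needs the AWS CLI and valid credentials, so it is not run here):
#   aws_nopager sts get-caller-identity
```

Setting the variable per call keeps the pager available for interactive use, while CI jobs and minimal containers avoid the missing-binary error.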

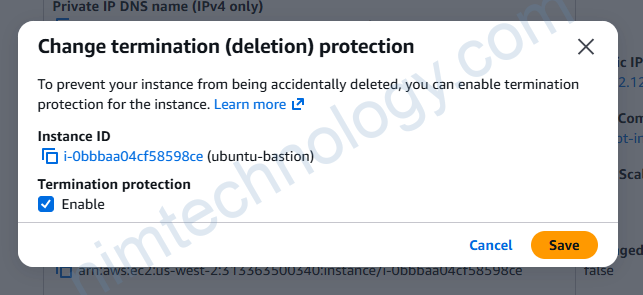

Here are the AWS commands I use most often:

aws configure --profile <profile-name>

aws configure list-profiles

aws sts get-caller-identity --profile <profile-name>

aws eks --region <region_name> update-kubeconfig --name <eks_cluster_name> --profile <profile-name>

aws eks list-clusters --region <region_name> --profile <profile_name>

aws eks --region <region-code> update-kubeconfig --name <cluster_name>

aws eks --region us-east-1 update-kubeconfig --name SAP-dev-eksdemo

grep -rH "text" /folder

Security Group for Inbound Connection

#https://docs.aws.amazon.com/cli/latest/reference/ec2/describe-security-group-rules.html

aws ec2 describe-security-group-rules \

--filter Name="group-id",Values="sg-0ead082eb96a4cfd8" \

--profile dev-mdcl-nimtechnology-engines

aws ec2 describe-security-groups --group-ids sg-0ead082eb96a4cfd8 --profile dev-mdcl-nimtechnology-engines

aws ec2 authorize-security-group-ingress --group-id sg-0ead082eb96a4cfd8 --protocol tcp --port 443 --cidr 0.0.0.0/0 --profile dev-mdcl-nimtechnology-engines

# Delete a rule from the security group

aws ec2 revoke-security-group-ingress --group-id sg-0ead082eb96a4cfd8 --security-group-rule-ids sgr-xxxxx --profile dev-mdcl-nimtechnology-engines --region us-west-2

AWS Configure Bash One Liner

https://stackoverflow.com/questions/34839449/aws-configure-bash-one-liner

aws configure set aws_access_key_id "AKIAI44QH8DHBEXAMPLE" --profile user2 \
&& aws configure set aws_secret_access_key "je7MtGbClwBF/2Zp9Utk/h3yCo8nvbEXAMPLEKEY" --profile user2 \
&& aws configure set region "us-east-1" --profile user2 \
&& aws configure set output "text" --profile user2

Using environment variables:

aws configure set aws_access_key_id "$AWS_ACCESS_KEY_ID" --profile user2 \
&& aws configure set aws_secret_access_key "$AWS_ACCESS_KEY_SECRET" --profile user2 \
&& aws configure set region "$AWS_REGION" --profile user2 \
&& aws configure set output "text" --profile user2
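If the same chain is needed for several profiles, it can be folded into a function. The following is a minimal sketch built only on the `aws configure set` calls shown above; `configure_profile` and its argument order are assumptions made for illustration.

```shell
#!/usr/bin/env bash
# configure_profile PROFILE KEY_ID SECRET REGION [OUTPUT]
# Hypothetical helper: writes each setting with `aws configure set`
# and stops at the first call that fails. OUTPUT defaults to "text".
configure_profile() {
  if [ "$#" -lt 4 ]; then
    echo "usage: configure_profile PROFILE KEY_ID SECRET REGION [OUTPUT]" >&2
    return 2
  fi
  cp_profile=$1
  aws configure set aws_access_key_id "$2" --profile "$cp_profile" &&
    aws configure set aws_secret_access_key "$3" --profile "$cp_profile" &&
    aws configure set region "$4" --profile "$cp_profile" &&
    aws configure set output "${5:-text}" --profile "$cp_profile"
}

# Usage (needs the AWS CLI, so it is not run here):
#   configure_profile user2 "$AWS_ACCESS_KEY_ID" "$AWS_ACCESS_KEY_SECRET" "$AWS_REGION"
```

Passing the secret as an argument keeps the sketch short, but it can show up in process listings; reading it from an environment variable inside the function would be a reasonable variation.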

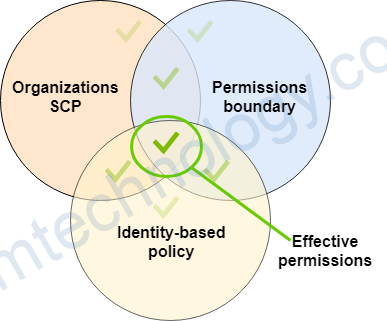

IAM

EC2

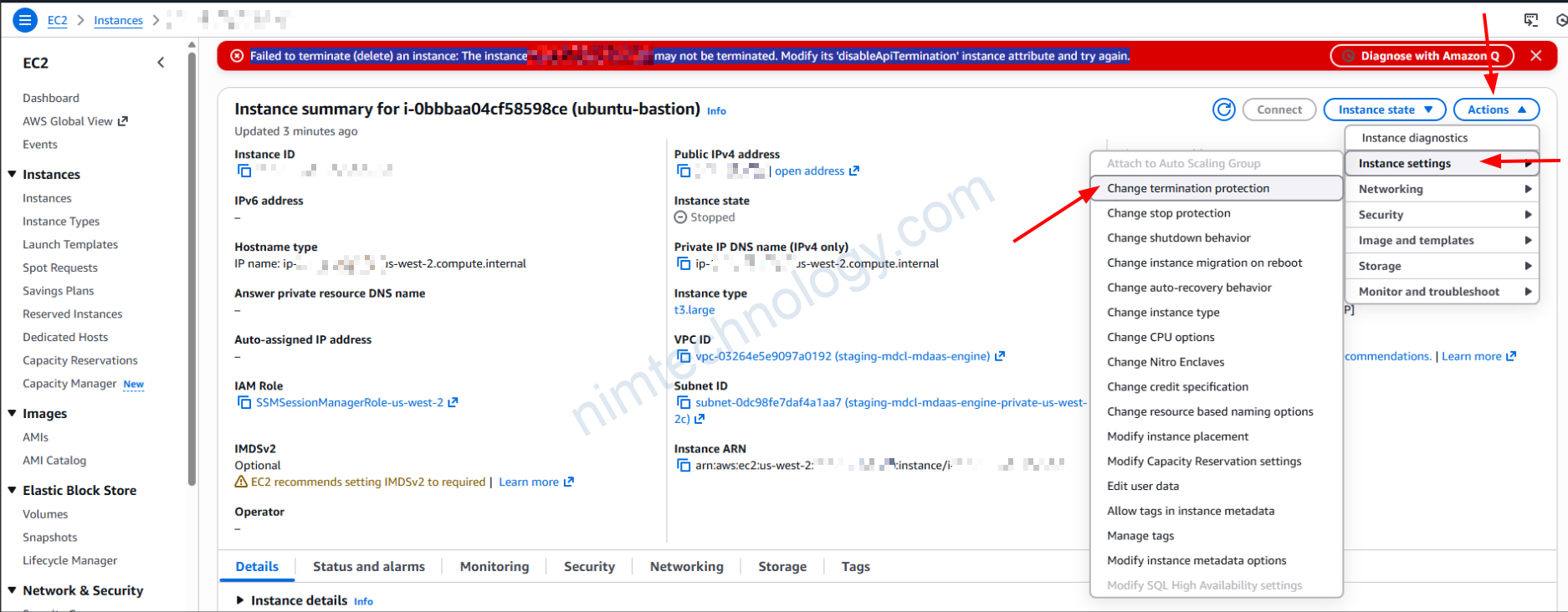

Prevent deletion of EC2:

List gp2 volumes:

aws ec2 describe-volumes --region us-west-2 --filters Name=volume-type,Values=gp2 \

--query "Volumes[].{Region:'us-west-2',VolumeId:VolumeId,AZ:AvailabilityZone,Size:Size,State:State,InstanceId:Attachments[0].InstanceId,Created:CreateTime}" \

--output table

EC2 instance stopped for at least 90 days has EBS volume attached

#!/bin/bash

# This script lists EC2 instances that have been stopped for more than 90 days.

# It also lists the attached EBS volumes for those instances.

echo "Checking for instances stopped > 90 days..."

printf "%-20s %-25s %-s\n" "INSTANCE_ID" "STOPPED_DATE" "VOLUME_IDS"

echo "--------------------------------------------------------------------------------"

aws ec2 describe-instances \

--filters "Name=instance-state-name,Values=stopped" \

--output json | jq -r '

now as $now |

($now - (90 * 86400)) as $cutoff |

.Reservations[].Instances[] |

# Filter for instances with a StateTransitionReason containing a date

select(.StateTransitionReason != null and (.StateTransitionReason | test("\\(\\d{4}-\\d{2}-\\d{2} \\d{2}:\\d{2}:\\d{2} GMT\\)"))) |

.StateTransitionReason as $reason |

($reason | capture("\\((?<d>\\d{4}-\\d{2}-\\d{2} \\d{2}:\\d{2}:\\d{2}) GMT\\)") | .d) as $date_str |

# Parse date to epoch

($date_str | strptime("%Y-%m-%d %H:%M:%S") | mktime) as $stop_time |

# Check if stopped before cutoff (older than 90 days)

select($stop_time <= $cutoff) |

[

.InstanceId,

$date_str,

([.BlockDeviceMappings[].Ebs.VolumeId] | join(","))

] | @tsv

' | while IFS=$'\t' read -r instance_id stop_date volume_ids; do

printf "%-20s %-25s %-s\n" "$instance_id" "$stop_date" "$volume_ids"

done

List AWS EBS volumes that are not attached to any EC2 instances

#!/bin/bash

# Script to list AWS EBS volumes that are not attached to any EC2 instance (Available state)

# Displays VolumeId as the first column.

echo "Checking for unattached (available) EBS volumes..."

# Print custom header

printf "%-25s %-10s %-15s %-20s %-25s\n" "VolumeId" "Size(GB)" "Status" "AvailabilityZone" "CreateTime"

echo "-----------------------------------------------------------------------------------------------"

# Use aws ec2 describe-volumes with filter for status=available

# We use --output text and a list projection [...] to strictly preserve column order

# Then we use 'column -t' (if available) or just let the shell formatting handle it,

# but simply printing strictly ordered text is the best way to control column order.

aws ec2 describe-volumes \

--filters Name=status,Values=available \

--query "Volumes[*].[VolumeId, Size, State, AvailabilityZone, CreateTime]" \

--output text | \

while read -r vol_id size state zone time; do

printf "%-25s %-10s %-15s %-20s %-25s\n" "$vol_id" "$size" "$state" "$zone" "$time"

done

echo "Done."

Elastic IP address without a resource associated

aws ec2 describe-addresses --query "Addresses[?AssociationId==null].{PublicIp:PublicIp, AllocationId:AllocationId, Domain:Domain}" --output table

ELBv1 does not have listeners

#!/bin/bash

echo "=== AWS ELBv1 (Classic Load Balancer) Report ==="

# 1. List ALL ELBv1 Load Balancers

echo -e "\n1. Listing ALL Classic Load Balancers:"

aws elb describe-load-balancers \

--query "LoadBalancerDescriptions[*].{LoadBalancerName:LoadBalancerName, DNSName:DNSName, ListenerCount:length(ListenerDescriptions)}" \

--output table

# 2. List ELBv1 Load Balancers with NO Listeners

echo -e "\n2. Listing Classic Load Balancers with NO listeners:"

aws elb describe-load-balancers \

--query "LoadBalancerDescriptions[?length(ListenerDescriptions)==\`0\`].{LoadBalancerName:LoadBalancerName, DNSName:DNSName, CreatedTime:CreatedTime}" \

--output table

echo -e "\nDone."

ELBv2 does not have listeners

#!/bin/bash

echo "Checking ELBv2 (ALB/NLB) for missing listeners..."

printf "%-50s %-20s %-15s\n" "LoadBalancerName" "Type" "Status"

echo "--------------------------------------------------------------------------------------"

# Get all ELBv2 ARNs, Names, and Types

# Format: one load balancer per line, whitespace-separated: Arn Name Type

aws elbv2 describe-load-balancers \

--query "LoadBalancers[].[LoadBalancerArn, LoadBalancerName, Type]" \

--output text | while read -r arn name lb_type; do

# Check listener count for this specific Load Balancer

listener_count=$(aws elbv2 describe-listeners --load-balancer-arn "$arn" --query "length(Listeners)" --output text 2>/dev/null)

# If count is 0 or empty, print it

if [[ "$listener_count" == "0" || -z "$listener_count" ]]; then

printf "%-50s %-20s %-15s\n" "$name" "$lb_type" "NO LISTENERS"

fi

done

echo "Done."

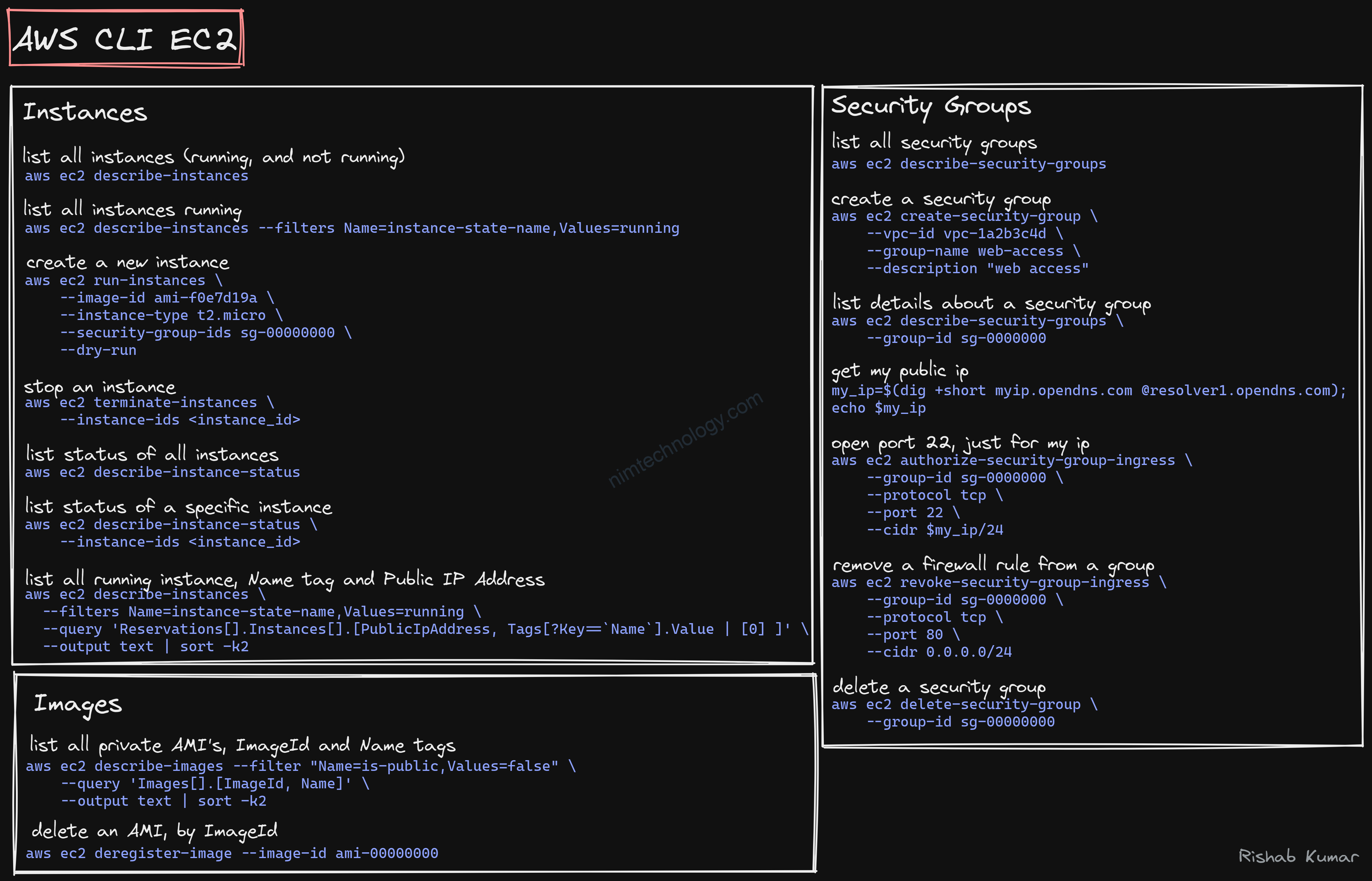

S3

https://github.com/rishabkumar7/cloud-cheat-sheets

https://stackoverflow.com/questions/27932345/downloading-folders-from-aws-s3-cp-or-sync

Using aws s3 cp from the AWS Command-Line Interface (CLI) will require the --recursive parameter to copy multiple files.

aws s3 cp --recursive s3://myBucket/dir localdir

The aws s3 sync command will, by default, copy a whole directory. It will only copy new/modified files.

aws s3 sync s3://mybucket/dir localdir

Just experiment to get the result you want.
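One low-risk way to experiment is the `--dryrun` flag, which `aws s3 sync` and `aws s3 cp` both accept: it prints the operations that would run without transferring anything. The wrapper below is a sketch; `s3_sync_preview` is a hypothetical name.

```shell
#!/usr/bin/env bash
# s3_sync_preview SOURCE DEST (hypothetical helper)
# Show what `aws s3 sync` would copy, without copying anything.
s3_sync_preview() {
  aws s3 sync "$1" "$2" --dryrun
}

# Usage (needs the AWS CLI and credentials, so it is not run here):
#   s3_sync_preview s3://mybucket/dir localdir
```

Once the preview lists the expected files, the same command without `--dryrun` performs the actual transfer.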

Documentation:

Check folder size on S3

aws s3 ls s3://<bucket_name>/<folder_name>/ --recursive --summarize --human-readable

example: aws s3 ls s3://nim-dataset-of-rainmaker/avtest/ --recursive --summarize --human-readable
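When only the total is needed, for example in a report script, the summary line can be filtered out of the listing. This sketch assumes the summary is printed as a line of the form `Total Size: <value>`, which is how `--summarize` output normally appears; `s3_total_size` is a hypothetical helper and the listing in the demo is made-up sample data, not real output.

```shell
#!/usr/bin/env bash
# s3_total_size (hypothetical helper): read `aws s3 ls --summarize` output
# on stdin and print only the value of the "Total Size" summary line.
# Assumption: the summary line looks like "   Total Size: 1.2 GiB".
s3_total_size() {
  awk -F': ' '/^ *Total Size:/ { print $2 }'
}

# Demo with made-up sample output (no AWS call is made here).
s3_total_size <<'EOF'
2024-01-01 10:00:00    1.2 GiB avtest/sample.bin

Total Objects: 1
   Total Size: 1.2 GiB
EOF
```

Against a real bucket the call would be `aws s3 ls s3://<bucket_name>/<folder_name>/ --recursive --summarize --human-readable | s3_total_size`.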

Checksum S3 files with Go

https://github.com/aws-samples/amazon-s3-checksum-tool

S3 Express One Zone

Copy a file from S3 Standard to S3 Express One Zone

root@LE11-D7891:~# time aws s3 cp s3://artifactory/1GB.txt s3://demo-s3-express-one-zone--usw2-az1--x-s3/1GB-v3.txt

copy: s3://artifactory/1GB.txt to s3://demo-s3-express-one-zone--usw2-az1--x-s3/1GB-v3.txt

real 0m32.645s

user 0m0.905s

sys 0m0.094s

Copy a file from S3 Express One Zone to S3 Standard

time aws s3 cp s3://demo-s3-express-one-zone--usw2-az1--x-s3/1GB.txt s3://artifactory-nim/1GB-v5.txt --storage-class ONEZONE_IA --copy-props none

copy: s3://demo-s3-express-one-zone--usw2-az1--x-s3/1GB.txt to s3://artifactory-nim/1GB-v5.txt

real 0m14.737s

user 0m0.872s

sys 0m0.087s

Find S3 buckets with Bucket Key disabled

list-s3-bucketkey-disabled.sh

#!/usr/bin/env bash

set -euo pipefail

usage() {

cat <<'EOF'

Usage: list-s3-bucketkey-disabled.sh [--kms-only]

Lists S3 buckets whose default encryption rules have the bucket key disabled.

Requires awscli and jq.

Options:

--kms-only Restrict results to rules using aws:kms algorithms.

EOF

}

kms_only=0

while [[ $# -gt 0 ]]; do

case "$1" in

-h|--help)

usage

exit 0

;;

--kms-only)

kms_only=1

;;

*)

echo "Unknown option: $1" >&2

usage >&2

exit 1

;;

esac

shift

done

get_bucket_region() {

local bucket="$1"

local location

if ! location=$(aws s3api get-bucket-location \

--bucket "$bucket" \

--query 'LocationConstraint' \

--output text 2>/dev/null); then

echo "" # unknown/forbidden

return

fi

case "$location" in

None|"") echo "us-east-1" ;;

EU) echo "eu-west-1" ;;

*) echo "$location" ;;

esac

}

buckets=$(aws s3api list-buckets \

--query 'Buckets[].Name' \

--output text 2>/dev/null || true)

if [[ -z "$buckets" ]]; then

echo "No buckets returned or access denied." >&2

exit 1

fi

printf "%-40s %-15s %-20s %-60s %s\n" "Bucket" "Region" "SSEAlgorithm" "KMSKeyId" "BucketKeyEnabled"

printf "%-40s %-15s %-20s %-60s %s\n" "------" "------" "------------" "--------" "----------------"

found=0

for bucket in $buckets; do

region=$(get_bucket_region "$bucket")

if [[ -z "$region" ]]; then

echo "Warning: skipping bucket '$bucket' (unable to determine region)." >&2

continue

fi

if ! encryption_json=$(aws s3api get-bucket-encryption \

--bucket "$bucket" \

--region "$region" \

--output json 2>/dev/null); then

# No encryption or insufficient permissions.

continue

fi

mapfile -t rules < <(

jq -r '

.ServerSideEncryptionConfiguration.Rules[]

| {

alg: .ApplyServerSideEncryptionByDefault.SSEAlgorithm,

kms: (.ApplyServerSideEncryptionByDefault.KMSMasterKeyID // "aws/s3"),

bucketKey: (.BucketKeyEnabled // false)

}

| select((.bucketKey | not))

| [ .alg, .kms, (if .bucketKey then "true" else "false" end) ]

| @tsv

' <<<"$encryption_json"

)

if [[ ${#rules[@]} -eq 0 ]]; then

continue

fi

for rule in "${rules[@]}"; do

IFS=$'\t' read -r alg kms bucket_key <<<"$rule"

if [[ $kms_only -eq 1 && $alg != aws:kms* ]]; then

continue

fi

printf "%-40s %-15s %-20s %-60s %s\n" "$bucket" "$region" "$alg" "$kms" "$bucket_key"

found=1

done

done

if [[ $found -eq 0 ]]; then

if [[ $kms_only -eq 1 ]]; then

echo "No S3 buckets found with aws:kms encryption and bucket keys disabled." >&2

else

echo "No S3 buckets found with bucket keys disabled." >&2

fi

fi

How to run:

Run ./list-s3-bucketkey-disabled.sh for the full view, or add --kms-only to focus on KMS-encrypted buckets.

Find S3 buckets without a lifecycle rule to delete incomplete multipart uploads
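For context, the rule this check looks for is a lifecycle rule containing `AbortIncompleteMultipartUpload`. The sketch below prints a minimal configuration of that shape; the rule ID, the empty prefix (meaning all objects) and the 7-day default are illustrative assumptions, and `mpu_rule_json` is a hypothetical helper.

```shell
#!/usr/bin/env bash
# mpu_rule_json [DAYS] (hypothetical helper)
# Print a lifecycle configuration with one rule that aborts incomplete
# multipart uploads DAYS days after they were started (default: 7).
# The rule ID and the empty prefix (all objects) are illustrative choices.
mpu_rule_json() {
  days=${1:-7}
  cat <<EOF
{
  "Rules": [
    {
      "ID": "abort-incomplete-mpu",
      "Status": "Enabled",
      "Filter": { "Prefix": "" },
      "AbortIncompleteMultipartUpload": { "DaysAfterInitiation": ${days} }
    }
  ]
}
EOF
}

# Usage (not run here). Note that put-bucket-lifecycle-configuration
# replaces the bucket's whole lifecycle configuration, so existing rules
# have to be merged in first:
#   aws s3api put-bucket-lifecycle-configuration --bucket <bucket_name> \
#     --lifecycle-configuration "$(mpu_rule_json 7)"
```

A bucket that carries a rule like this no longer appears in the "Missing MPU Rule" output of the script below.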

root@LE11-D7891:~# cat find_incomplete_mpu_buckets.sh

#!/bin/bash

# Print header

printf "%-50s | %-20s\n" "Bucket Name" "Status"

printf "%s\n" "---------------------------------------------------|---------------------"

# Get list of buckets

buckets=$(aws s3api list-buckets --query "Buckets[].Name" --output text)

if [ $? -ne 0 ]; then

echo "Error listing buckets. Please check your AWS credentials."

exit 1

fi

for bucket in $buckets; do

# Check lifecycle configuration

# stderr is merged into stdout (2>&1) so the error text can be inspected below

lifecycle_output=$(aws s3api get-bucket-lifecycle-configuration --bucket "$bucket" --output json 2>&1)

exit_code=$?

if [ $exit_code -ne 0 ]; then

if echo "$lifecycle_output" | grep -q "NoSuchLifecycleConfiguration"; then

printf "%-50s | %-20s\n" "$bucket" "No Lifecycle Config"

elif echo "$lifecycle_output" | grep -q "AccessDenied"; then

printf "%-50s | %-20s\n" "$bucket" "Access Denied"

else

printf "%-50s | %-20s\n" "$bucket" "Error"

fi

else

# Check if AbortIncompleteMultipartUpload exists in the rules

if echo "$lifecycle_output" | grep -q "AbortIncompleteMultipartUpload"; then

# Found the rule, so this bucket is fine. We skip printing it or print "OK" if verbose.

# User asked to find those *without* the rule.

continue

else

printf "%-50s | %-20s\n" "$bucket" "Missing MPU Rule"

fi

fi

done

Secrets Manager

https://www.learnaws.org/2022/08/28/aws-cli-secrets-manager/#how-to-list-all-secrets

ECR

Retagging an image: https://docs.aws.amazon.com/AmazonECR/latest/userguide/image-retag.html

You can retag without pulling or pushing the image with Docker.

I found a script written by someone else:

To run that script:

chmod +x ecr_add_tag.sh

Source the script: you can source the script into your shell so that you can use the ecr-add-tag function directly:

source ecr_add_tag.sh

How to use the function

After sourcing the script, you can use the function ecr-add-tag in your terminal as follows:

ecr-add-tag ECR_REPO_NAME TAG_TO_FIND TAG_TO_ADD [AWS_PROFILE]

ECR_REPO_NAME: The name of the ECR repository.

TAG_TO_FIND: The existing tag of the image you want to re-tag.

TAG_TO_ADD: The new tag you want to add to the image.

[AWS_PROFILE]: (Optional) The AWS profile to use. If not provided, the default profile is used.

Example:

ecr-add-tag my-ecr-repo 1.0.0 1.0.1 my-aws-profile

A simpler standalone script:

#!/usr/bin/env bash

# Disable AWS CLI pager

export AWS_PAGER=""

# Check for the correct number of arguments

if (( $# < 3 )); then

echo "Wrong number of arguments. Usage: $0 ECR_REPO_NAME TAG_TO_FIND TAG_TO_ADD [AWS_PROFILE]"

exit 1

fi

# Parse the arguments

repo_name=$1

existing_tag=$2

new_tag=$3

profile=$4

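# If a profile is provided, pass it to the CLI as separate arguments

profile_args=()

[[ -n "$profile" ]] && profile_args=(--profile "$profile")

# Fetch the existing image manifest

manifest=$(aws ecr batch-get-image "${profile_args[@]}" \
--repository-name "$repo_name" \
--image-ids imageTag="$existing_tag" \
--query 'images[].imageManifest' \
--output text)

# Stop if no image carries the existing tag

if [[ -z "$manifest" || "$manifest" == "None" ]]; then

echo "No image with tag '$existing_tag' found in '$repo_name'." >&2

exit 1

fi

# Add the new tag to the image

aws ecr put-image "${profile_args[@]}" \
--repository-name "$repo_name" \
--image-tag "$new_tag" \
--image-manifest "$manifest"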

Then run:

bash ecr-add-tag.sh alpine/terragrunt 1.1.7-eks 1.1.7-eks.v6 nim-dev

AWS CLI Deployment

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    workload.user.cattle.io/workloadselector: apps.deployment-nim-engines-dev-aws-cli
  name: aws-cli
  namespace: nim-engines-dev
spec:
  replicas: 1
  selector:
    matchLabels:
      workload.user.cattle.io/workloadselector: apps.deployment-nim-engines-dev-aws-cli
  strategy:
    rollingUpdate:
      maxSurge: 25%
      maxUnavailable: 25%
    type: RollingUpdate
  template:
    metadata:
      labels:
        workload.user.cattle.io/workloadselector: apps.deployment-nim-engines-dev-aws-cli
      namespace: nim-engines-dev
    spec:
      containers:
        - command:
            - /bin/sh
            - '-c'
            - while true; do sleep 3600; done
          env:
            - name: AWS_ACCESS_KEY_ID
              value: XXXXXXXXXXX
            - name: AWS_SECRET_ACCESS_KEY
              value: XXXXXXXXXXXXXXXL0eXPPXXXXHUU1bW
            - name: AWS_DEFAULT_REGION
              value: us-west-2
          image: amazon/aws-cli:latest
          imagePullPolicy: Always
          name: aws-cli
          resources: {}
          securityContext:
            allowPrivilegeEscalation: false
            privileged: false
            readOnlyRootFilesystem: false
            runAsNonRoot: false
          terminationMessagePath: /dev/termination-log
          terminationMessagePolicy: File
          volumeMounts:
            - mountPath: /app/downloaded
              name: file-service
      dnsPolicy: ClusterFirst
      nodeSelector:
        kubernetes.io/os: linux
      restartPolicy: Always
      schedulerName: default-scheduler
      securityContext: {}
      terminationGracePeriodSeconds: 30
      volumes:
        - name: file-service
          persistentVolumeClaim:
            claimName: pvc-file-service-smb-1

ELB

Find ELBv2 instances without listeners.

#!/usr/bin/env bash

set -euo pipefail

usage() {

cat <<'EOF'

Usage: list-empty-elbv2.sh [--all-regions] [region ...]

--all-regions Query every active AWS region (overrides other region args).

region Explicit region(s) to query (space separated).

If no regions are provided, the script uses AWS_REGION (space separated list allowed),

falling back to us-east-1.

EOF

}

regions=()

while [[ $# -gt 0 ]]; do

case "$1" in

-h|--help)

usage

exit 0

;;

--all-regions)

mapfile -t regions < <(

aws ec2 describe-regions \

--query 'Regions[].RegionName' \

--output json |

jq -r '.[]'

)

;;

-*)

echo "Unknown option: $1" >&2

usage >&2

exit 1

;;

*)

regions+=("$1")

;;

esac

shift

done

if [[ ${#regions[@]} -eq 0 ]]; then

if [[ -n "${AWS_REGION:-}" ]]; then

# Allow AWS_REGION to contain a space- or comma-separated list.

IFS=' ,'

read -r -a regions <<< "${AWS_REGION}"

unset IFS

else

regions=(us-east-1)

fi

fi

# Deduplicate while preserving order.

declare -A seen=()

unique_regions=()

for region in "${regions[@]}"; do

[[ -z "$region" ]] && continue

if [[ -z "${seen[$region]:-}" ]]; then

seen["$region"]=1

unique_regions+=("$region")

fi

done

if [[ ${#unique_regions[@]} -eq 0 ]]; then

echo "No regions to query." >&2

exit 1

fi

printf "%-20s %-40s %s\n" "Region" "LoadBalancerName" "LoadBalancerArn"

printf "%-20s %-40s %s\n" "------" "------------------" "----------------"

found=0

for region in "${unique_regions[@]}"; do

mapfile -t load_balancers < <(

aws elbv2 describe-load-balancers \

--region "$region" \

--query 'LoadBalancers[].{Name:LoadBalancerName,Arn:LoadBalancerArn}' \

--output json |

jq -r '.[] | [.Name, .Arn] | @tsv'

)

if [[ ${#load_balancers[@]} -eq 0 ]]; then

continue

fi

for lb in "${load_balancers[@]}"; do

IFS=$'\t' read -r name arn <<< "$lb"

listener_count=$(aws elbv2 describe-listeners \

--region "$region" \

--load-balancer-arn "$arn" \

--query 'Listeners | length(@)' \

--output text 2>/dev/null || echo 0)

if [[ "$listener_count" == "0" || "$listener_count" == "None" ]]; then

printf "%-20s %-40s %s\n" "$region" "$name" "$arn"

found=1

fi

done

done

if [[ $found -eq 0 ]]; then

echo "No listenerless ELBv2 load balancers found." >&2

fi

Run

root@LE11-D7891:~# bash ./list-empty-elbv2.sh --all-regions

Region LoadBalancerName LoadBalancerArn

------ ------------------ ----------------

No listenerless ELBv2 load balancers found.