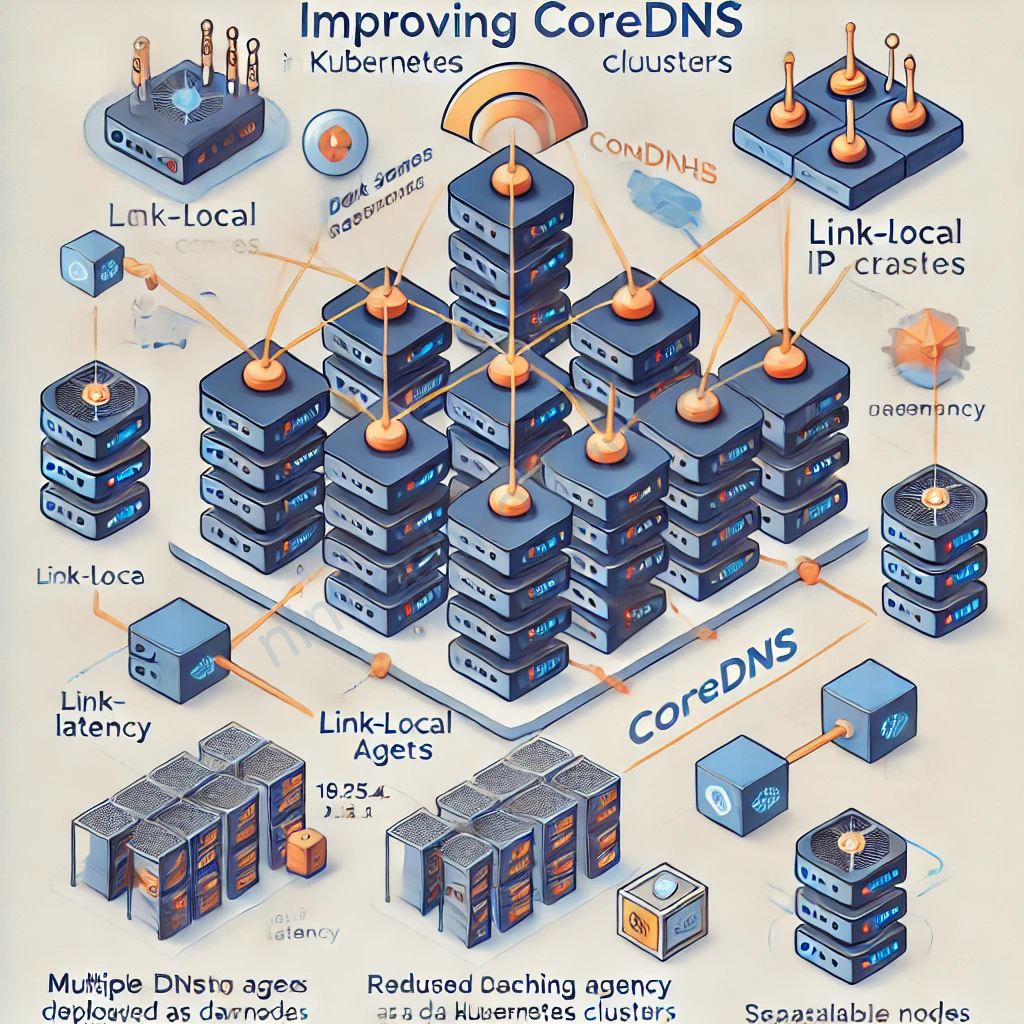

NodeLocal DNSCache

NodeLocal DNSCache enhances Kubernetes cluster DNS performance by deploying a DNS caching agent on each node as a DaemonSet. This setup allows Pods to query a local DNS cache on the same node, reducing latency and avoiding potential bottlenecks associated with centralized DNS services.

```bash
curl -sL https://raw.githubusercontent.com/kubernetes/kubernetes/master/cluster/addons/dns/nodelocaldns/nodelocaldns.yaml \
  | sed -e 's/__PILLAR__DNS__DOMAIN__/cluster.local/g' \
  | sed -e "s/__PILLAR__DNS__SERVER__/$(kubectl get service --namespace kube-system kube-dns -o jsonpath='{.spec.clusterIP}')/g" \
  | sed -e 's/__PILLAR__LOCAL__DNS__/169.254.20.10/g' \
  | kubectl apply -f -
```
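If you would rather review the rendered manifest before applying it, the same substitutions can be wrapped in a small shell function. This is a minimal sketch: `render_nodelocaldns` is a hypothetical helper name, and it only performs the three substitutions used in the command above (the kube-proxy iptables-mode variant). For kube-proxy in IPVS mode, the official NodeLocal DNSCache guide uses different substitutions (it fills `__PILLAR__CLUSTER__DNS__` instead) and also requires pointing kubelet's `--cluster-dns` at the local address, so check that guide before reusing this.

```bash
# Hypothetical helper: replace the NodeLocal DNSCache template placeholders in a
# manifest read from stdin and write the result to stdout (iptables-mode values).
#   $1 -> __PILLAR__DNS__DOMAIN__  (cluster domain, e.g. cluster.local)
#   $2 -> __PILLAR__DNS__SERVER__  (ClusterIP of the kube-dns Service)
#   $3 -> __PILLAR__LOCAL__DNS__   (local listen address, e.g. 169.254.20.10)
render_nodelocaldns() {
  local domain kubedns localdns
  domain=$1
  kubedns=$2
  localdns=$3
  sed -e "s/__PILLAR__DNS__DOMAIN__/${domain}/g" \
      -e "s/__PILLAR__DNS__SERVER__/${kubedns}/g" \
      -e "s/__PILLAR__LOCAL__DNS__/${localdns}/g"
}

# Example (needs network access and a kubeconfig):
#   kubedns=$(kubectl get service --namespace kube-system kube-dns -o jsonpath='{.spec.clusterIP}')
#   curl -sL https://raw.githubusercontent.com/kubernetes/kubernetes/master/cluster/addons/dns/nodelocaldns/nodelocaldns.yaml \
#     | render_nodelocaldns cluster.local "$kubedns" 169.254.20.10 > nodelocaldns.rendered.yaml
#   kubectl diff -f nodelocaldns.rendered.yaml
#   kubectl apply -f nodelocaldns.rendered.yaml
```

Writing to a file first lets `kubectl diff` show what would change on a cluster where NodeLocal DNSCache is already installed.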

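`__PILLAR__DNS__DOMAIN__` is the cluster domain, `cluster.local` by default. `__PILLAR__DNS__SERVER__` is the ClusterIP of the `kube-dns` Service, which the command looks up with `kubectl`. `__PILLAR__LOCAL__DNS__` is the local listen IP address chosen for NodeLocal DNSCache.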

Note: The local listen IP address for NodeLocal DNSCache can be any address that can be guaranteed to not collide with any existing IP in your cluster. It’s recommended to use an address with a local scope, for example, from the ‘link-local’ range ‘169.254.0.0/16’ for IPv4 or from the ‘Unique Local Address’ range in IPv6 ‘fd00::/8’.

Use a Link-Local IP Address

It is highly recommended to use a link-local IP address from the reserved range 169.254.0.0/16. These addresses:

- Are valid only on the local node.

- Cannot be routed externally or across nodes.

- Are ideal for ensuring traffic is confined to the node itself.

Examples of valid link-local IPs:

- `169.254.20.10` (default)

- `169.254.1.1`

- Any other address in the `169.254.0.0/16` range.
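A quick sanity check on a candidate address can be scripted. The sketch below (`is_link_local_ipv4` is a hypothetical helper) only verifies that an IPv4 address is well-formed and falls inside `169.254.0.0/16`; it cannot tell whether the address is already in use. On AWS in particular, `169.254.169.254` is the instance metadata service and `169.254.169.253` is the Amazon-provided DNS server, so avoid those when choosing the NodeLocal DNSCache address.

```bash
# Hypothetical helper: return 0 if $1 is a dotted-quad IPv4 address inside
# 169.254.0.0/16 (the link-local range), 1 otherwise. Does NOT check for collisions.
is_link_local_ipv4() {
  local ip old_ifs octet
  ip=$1
  # Only digits and dots, no empty octets, no leading or trailing dot.
  case $ip in
    *[!0-9.]*|*..*|.*|*.) return 1 ;;
  esac
  # Split on dots into positional parameters $1..$4.
  old_ifs=$IFS
  IFS=.
  set -- $ip
  IFS=$old_ifs
  [ $# -eq 4 ] || return 1
  # Each octet: at most 3 digits and a value in 0..255.
  for octet in "$@"; do
    [ ${#octet} -le 3 ] && [ "$octet" -le 255 ] || return 1
  done
  [ "$1" -eq 169 ] && [ "$2" -eq 254 ]
}

# Example:
#   is_link_local_ipv4 169.254.20.10 && echo "ok to consider"
```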

To evaluate node-local-dns, you can look at its metrics (run this from a Pod inside the cluster, since the Service name only resolves through cluster DNS):

```bash
curl node-local-dns.kube-system:9253/metrics
```

```text
# HELP coredns_build_info A metric with a constant '1' value labeled by version, revision, and goversion from which CoreDNS was built.
# TYPE coredns_build_info gauge
coredns_build_info{goversion="go1.22.3",revision="",version="1.11.3"} 1
# HELP coredns_cache_entries The number of elements in the cache.
# TYPE coredns_cache_entries gauge
coredns_cache_entries{server="dns://169.254.20.10:53",type="denial",view="",zones="."} 1
coredns_cache_entries{server="dns://169.254.20.10:53",type="denial",view="",zones="cluster.local."} 1
coredns_cache_entries{server="dns://169.254.20.10:53",type="denial",view="",zones="in-addr.arpa."} 1
coredns_cache_entries{server="dns://169.254.20.10:53",type="denial",view="",zones="ip6.arpa."} 1
coredns_cache_entries{server="dns://169.254.20.10:53",type="success",view="",zones="."} 0
coredns_cache_entries{server="dns://169.254.20.10:53",type="success",view="",zones="cluster.local."} 0
coredns_cache_entries{server="dns://169.254.20.10:53",type="success",view="",zones="in-addr.arpa."} 0
```
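The raw output is long, so a couple of awk filters help summarize it. This is a sketch with hypothetical helper names: `sum_cache_entries_by_type` totals the `coredns_cache_entries` gauge shown above per `type` label, and `cache_hit_ratio` computes hits / (hits + misses) from the CoreDNS cache plugin counters `coredns_cache_hits_total` and `coredns_cache_misses_total`. Those two counters do not appear in the excerpt above, so confirm your CoreDNS version exposes them. Both helpers read Prometheus text format on stdin and sum across every server and zone; keep in mind that each `curl` request returns the metrics of a single node-local-dns Pod, and the counters are cumulative since that Pod started.

```bash
# Hypothetical helpers: both read Prometheus text-format metrics on stdin, e.g.
#   curl -s node-local-dns.kube-system:9253/metrics | sum_cache_entries_by_type
#   curl -s node-local-dns.kube-system:9253/metrics | cache_hit_ratio

# Total the coredns_cache_entries gauge per type label (denial / success),
# summed over every server and zone.
sum_cache_entries_by_type() {
  awk '
    /^coredns_cache_entries[{]/ && match($0, /type="[^"]*"/) {
      t = substr($0, RSTART + 6, RLENGTH - 7)  # strip the leading type= and both quotes
      sum[t] += $NF                            # the sample value is the last field
    }
    END { for (t in sum) printf "%s %d\n", t, sum[t] }
  ' | sort
}

# Cache hit ratio = hits / (hits + misses) from the cumulative cache counters;
# prints n/a when there is nothing to divide.
cache_hit_ratio() {
  awk '
    /^coredns_cache_hits_total[{]/   { hits += $NF }
    /^coredns_cache_misses_total[{]/ { misses += $NF }
    END {
      total = hits + misses
      if (total == 0) { print "n/a"; exit }
      printf "%.2f\n", hits / total
    }
  '
}
```

Fed the excerpt above, `sum_cache_entries_by_type` reports 4 denial (negative-answer) entries and 0 success entries.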

Cluster Proportional Autoscaler

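This is a workload dedicated to scaling CoreDNS: it watches the size of the cluster (number of nodes and cores) and adjusts the replica count of the `coredns` Deployment accordingly.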

```yaml
apiVersion: argoproj.io/v1alpha1
kind: Application
metadata:
  name: kube-dns-autoscaler-dev
  namespace: argocd
spec:
  destination:
    namespace: kube-system
    name: 'arn:aws:eks:us-west-2:043701111869:cluster/dev-mdcl-nim-engines'
  project: meta-structure
  source:
    repoURL: https://kubernetes-sigs.github.io/cluster-proportional-autoscaler
    targetRevision: "1.1.0"
    chart: cluster-proportional-autoscaler
    helm:
      values: |
        fullnameOverride: "kube-dns-autoscaler"
        nodeSelector:
          kubernetes.io/os: linux
        resources:
          requests:
            cpu: 20m
            memory: 100Mi
        config:
          linear:
            coresPerReplica: 100
            nodesPerReplica: 8
            min: 2
            max: 20
            preventSinglePointFailure: true
            includeUnschedulableNodes: true
        options:
          namespace: kube-system
          target: "deployment/coredns"
```

- Helm Chart:

  - We’re using the Cluster Proportional Autoscaler Helm chart (`cluster-proportional-autoscaler`) from the official repository: https://kubernetes-sigs.github.io/cluster-proportional-autoscaler

  - The chart version is pinned to `1.1.0`.

- Custom Values:

  - `fullnameOverride`: the autoscaler is named `kube-dns-autoscaler`.
  - `nodeSelector`: ensures the autoscaler runs only on Linux nodes.
  - `resources`: resource requests are set to `20m` CPU and `100Mi` memory.
  - `config.linear`:
    - `coresPerReplica`: 1 replica per 100 cores.
    - `nodesPerReplica`: 1 replica per 8 nodes.
    - `min`: minimum of 2 replicas.
    - `max`: maximum of 20 replicas.
    - `preventSinglePointFailure`: keeps at least 2 replicas whenever the cluster has more than one node, so CoreDNS never runs as a single Pod (if that one Pod went down, DNS would fail for the whole cluster).
    - `includeUnschedulableNodes`: counts unschedulable nodes together with schedulable ones when computing the cluster's total node count.

- `options`: the autoscaler targets the `coredns` Deployment in the `kube-system` namespace.
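To see how these settings translate into a replica count, the linear-mode formula from the cluster-proportional-autoscaler README can be reproduced in a few lines of shell: replicas = max(ceil(cores / coresPerReplica), ceil(nodes / nodesPerReplica)), clamped to [min, max], with `preventSinglePointFailure` raising the result to 2 when the cluster has more than one node. This is an illustrative sketch, not the controller's code (edge cases may differ); `cpa_linear_replicas` is a hypothetical name, inputs are assumed to be positive integers, and the node and core counts in the examples are made up.

```bash
# Hypothetical sketch of the CPA linear-mode calculation (README formula).
# Pass the total node count (including unschedulable nodes) when
# includeUnschedulableNodes is true, otherwise only schedulable nodes.

# Integer ceiling division for positive integers.
ceil_div() { echo $(( ($1 + $2 - 1) / $2 )); }

# Usage: cpa_linear_replicas NODES CORES CORES_PER_REPLICA NODES_PER_REPLICA MIN MAX PREVENT_SPOF
cpa_linear_replicas() {
  local nodes=$1 cores=$2 cores_per=$3 nodes_per=$4 min=$5 max=$6 prevent_spof=$7
  local from_cores from_nodes replicas
  from_cores=$(ceil_div "$cores" "$cores_per")
  from_nodes=$(ceil_div "$nodes" "$nodes_per")
  # Take whichever dimension asks for more replicas.
  replicas=$(( from_cores > from_nodes ? from_cores : from_nodes ))
  # Clamp to [min, max].
  if [ "$replicas" -gt "$max" ]; then replicas=$max; fi
  if [ "$replicas" -lt "$min" ]; then replicas=$min; fi
  # preventSinglePointFailure: at least 2 replicas when there is more than one node.
  if [ "$prevent_spof" = true ] && [ "$nodes" -gt 1 ] && [ "$replicas" -lt 2 ]; then
    replicas=2
  fi
  echo "$replicas"
}

# Examples with the values from the Application above (100 cores/replica,
# 8 nodes/replica, min 2, max 20):
#   cpa_linear_replicas 24 96 100 8 2 20 true      # 24 nodes x 4 cores  -> 3
#   cpa_linear_replicas 100 400 100 8 2 20 true    # 100 nodes x 4 cores -> 13
#   cpa_linear_replicas 200 3200 100 8 2 20 true   # 200 nodes x 16 cores: 32 by cores, capped -> 20
```

With small nodes the `nodesPerReplica: 8` term dominates; `coresPerReplica: 100` only wins on large nodes, and `max: 20` caps the result. Since `min: 2` is already set, `preventSinglePointFailure` acts as a safety net here.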

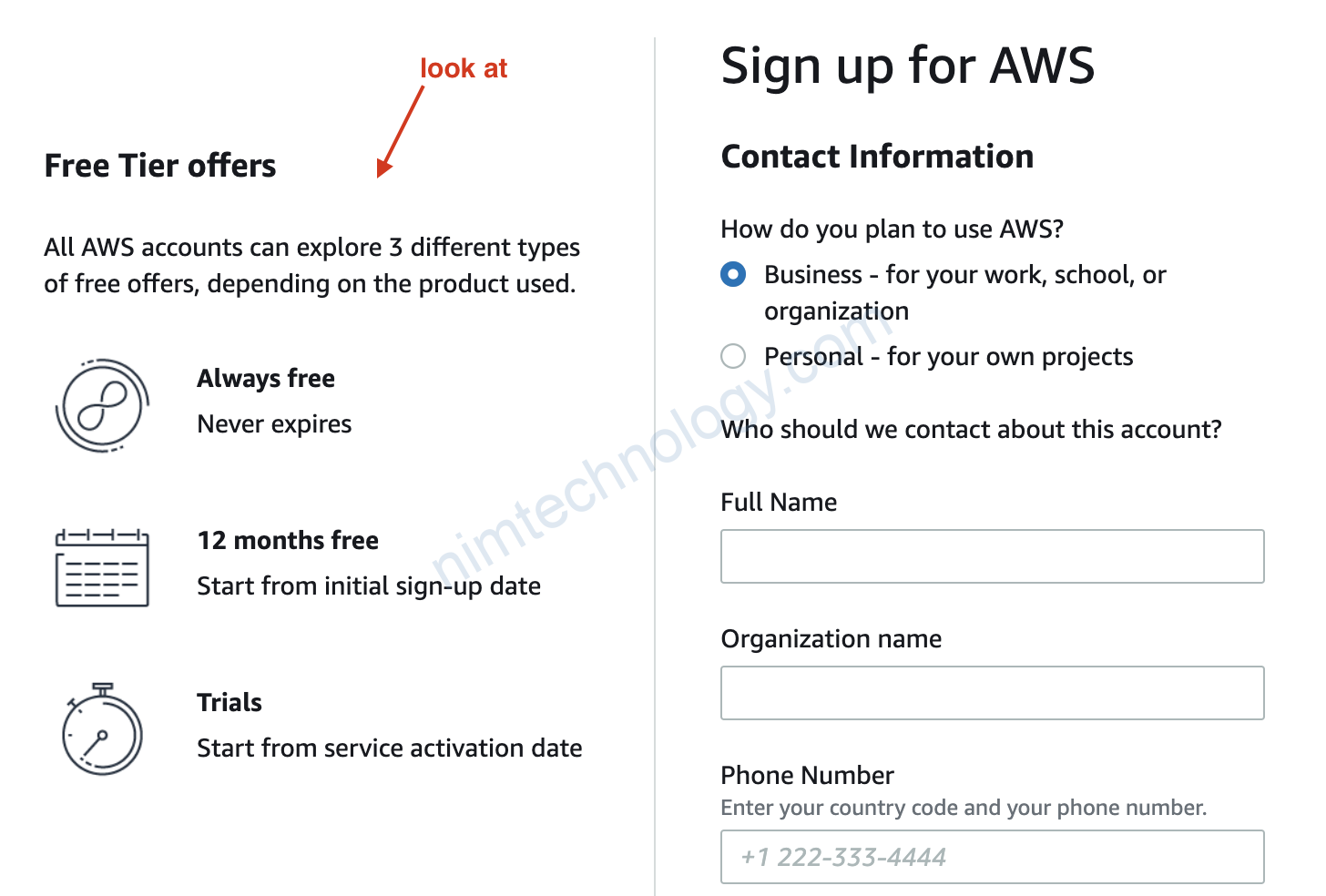

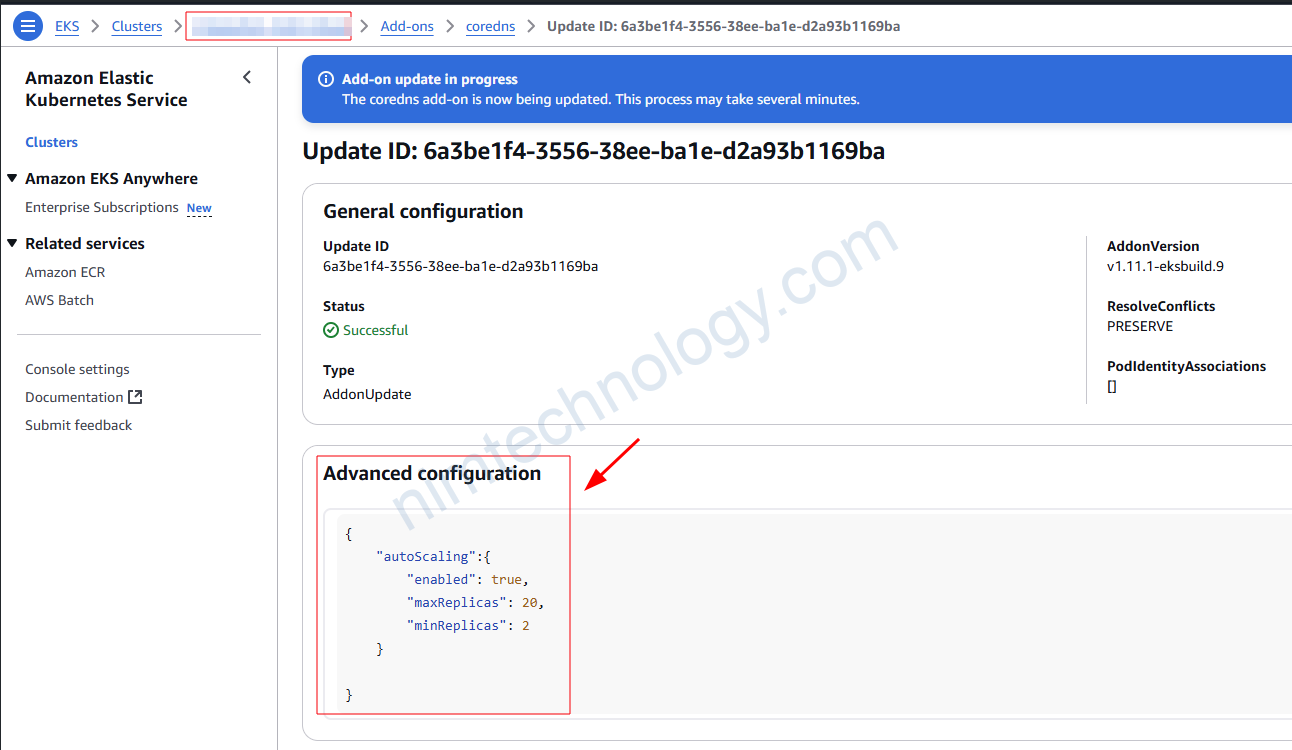

Autoscaling CoreDNS with the EKS Add-on (AWS Configuration)

When you provision an EKS cluster with Terraform, you can enable CoreDNS autoscaling through `cluster_addons`:

```hcl
module "eks" {
  source  = "terraform-aws-modules/eks/aws"
  version = "~> 20.31"

  cluster_name    = "example"
  cluster_version = "1.31"

  # Optional
  cluster_endpoint_public_access = true

  # Optional: Adds the current caller identity as an administrator via cluster access entry
  enable_cluster_creator_admin_permissions = true

  cluster_compute_config = {
    enabled    = true
    node_pools = ["general-purpose"]
  }

  vpc_id     = "vpc-1234556abcdef"
  subnet_ids = ["subnet-abcde012", "subnet-bcde012a", "subnet-fghi345a"]

  tags = {
    Environment = "dev"
    Terraform   = "true"
  }

  cluster_addons = {
    coredns = {
      configuration_values = jsonencode({
        autoScaling = {
          enabled     = true
          minReplicas = 2  # Optional: Set the minimum number of replicas
          maxReplicas = 10 # Optional: Set the maximum number of replicas
        }
      })
    }
  }
}
```
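The same add-on setting can also be applied without Terraform. The sketch below uses a hypothetical helper, `coredns_autoscaling_config`, to print the JSON that the `jsonencode` call above produces (key order aside), and shows as comments how it could be passed to `aws eks update-addon --configuration-values`. The cluster name `example` is taken from the Terraform snippet. CoreDNS autoscaling is only available for certain EKS platform and CoreDNS add-on versions, so check the add-on's configuration schema (`aws eks describe-addon-configuration`) before relying on it.

```bash
# Hypothetical helper: print the CoreDNS add-on autoscaling configuration JSON.
#   $1 = minReplicas, $2 = maxReplicas
coredns_autoscaling_config() {
  local min=$1 max=$2
  if [ "$min" -gt "$max" ]; then
    echo "minReplicas ($min) must not exceed maxReplicas ($max)" >&2
    return 1
  fi
  printf '{"autoScaling":{"enabled":true,"minReplicas":%d,"maxReplicas":%d}}\n' "$min" "$max"
}

# Example (requires AWS credentials for the account that owns the cluster):
#   aws eks update-addon --cluster-name example --addon-name coredns \
#     --configuration-values "$(coredns_autoscaling_config 2 10)"
#   aws eks describe-addon --cluster-name example --addon-name coredns \
#     --query 'addon.configurationValues' --output text
```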

The resulting configuration is applied as follows:

- I also found a few resources on the VPC DNS throttling issue:

  - https://www.rearc.io/blog/troubleshooting-intermittent-dns-resolution-issues-on-eks-clusters
  - How can I determine whether my DNS queries to the Amazon-provided DNS server are failing due to VPC DNS throttling? https://repost.aws/knowledge-center/vpc-find-cause-of-failed-dns-queries
  - Check if DNS queries to the Amazon provided DNS server are failing due to VPC DNS throttling: https://gist.github.com/suhas316380/7399af4e7c3fb1ca2a8d39403e9a00f5
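The AWS knowledge-center article linked above checks for throttling on the node itself: the Amazon-provided DNS server accepts up to 1024 packets per second per network interface, and the ENA driver counts packets dropped because traffic to link-local services (the Amazon DNS server, instance metadata, time sync) exceeded the allowed rate in `linklocal_allowance_exceeded`, shown by `ethtool -S`. The helper below, `linklocal_drops` (a hypothetical name), extracts that counter; sampling it twice and comparing shows whether drops are still happening. The interface name `eth0` in the example is an assumption and may differ on your nodes. A node-level cache such as NodeLocal DNSCache should reduce this pressure, since repeated lookups are answered locally instead of being forwarded upstream.

```bash
# Hypothetical helper: print the ENA counter linklocal_allowance_exceeded from
# "ethtool -S <iface>" output read on stdin; exit status 1 if the counter is absent
# (for example, when the interface does not use the ENA driver).
linklocal_drops() {
  awk '$1 == "linklocal_allowance_exceeded:" { print $2; found = 1 }
       END { if (!found) exit 1 }'
}

# Example on an EKS node (eth0 assumed to be the primary ENA interface):
#   before=$(ethtool -S eth0 | linklocal_drops)
#   sleep 60
#   after=$(ethtool -S eth0 | linklocal_drops)
#   echo "link-local drops in the last minute: $(( after - before ))"
```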